Users want their digital experiences to be fast, reliable, and secure across mobile applications, web pages, and APIs (Application Programming Interfaces). Advanced technologies and easy internet access have made customers dependent on instant services. A few seconds of delay or a hang-up when interacting with web pages or conducting a transaction are unforgivable and result in a bad customer experience. Given the competitive market space, customers don’t find it difficult to move on to your rival if they experience a poor digital service. They don’t expect anything short of an outstanding digital presence with reliable accessibility regardless of time and location.

Although businesses try to keep their customers delighted with impressive customer experience, high availability and faster loading time of sub-pages are rather tricky to achieve. To offer a reliable digital experience, organizations need to monitor how applications are responding to end users’ requests. They must seek full visibility into everything—web objects, third-party integrations, browser codes, and internet infrastructure—that impacts the performance, speed, accessibility, and customer satisfaction.

Synthetic Monitoring

Synthetic Monitoring, also known as Synthetic Testing, is the application performance management strategy that provides the required visibility into the end-user experience. It is a monitoring approach where you simulate user interactions with your digital assets to test and track uptime, business transactions, and user journey through the app. The simulated traffic is given a set of variables like device types, geographical location, browser, and internet network.
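To make the idea of test variables concrete, here is a minimal sketch of how a set of simulated user profiles could be generated from those variables. The `Profile` class, the `build_profiles` helper, and the sample values are hypothetical and chosen only for illustration; a real monitoring tool will have its own configuration format.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Profile:
    """One simulated user context (hypothetical structure)."""
    device: str
    location: str
    browser: str
    network: str


def build_profiles(devices, locations, browsers, networks):
    """Return one Profile for every combination of the given variables."""
    return [Profile(*combo)
            for combo in product(devices, locations, browsers, networks)]


# Sample values are assumptions, not a recommended test matrix.
profiles = build_profiles(
    devices=["desktop", "mobile"],
    locations=["Mumbai", "London"],
    browsers=["chrome", "firefox"],
    networks=["wifi", "4g"],
)
print(len(profiles))  # 2 * 2 * 2 * 2 = 16 combinations
```

Each profile would then be handed to the script that replays the user journey, so the same flow is measured under every combination.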

A well-executed synthetic monitoring plan helps you offer a delightful customer experience with consistent services across your customers’ digital touchpoints. This active monitoring approach uses scripts that replicate user behavior to give you a clear picture of the gaps in your website or application performance. The results it generates give you insights into:

- Application performance

- Uptime or downtime

- Webpage load time

- Third-party component performance

- Transaction failures

How Does Synthetic Monitoring Work?

Synthetic monitoring drives real devices through your application by triggering a bot that executes automated, scripted transactions. The simulation is run with a variety of variables to understand application performance across browsers and devices. Conducted in a private test ecosystem and a network operations center (NOC), the simulation imitates the end user’s journey through form submissions, account creation, app navigation, eCommerce transactions, or other operational flows to test the functionality of new features and updates.

The test ideally runs every 10 to 15 minutes from a single browser or multiple browsers, spread across various locations, to generate a holistic behavioral pattern. The results of this direct testing are shared with you for further analysis. If the flow encounters an error, the monitoring system reruns the test before escalating the problem. Synthetic monitoring can be executed within your firewall to specifically test the performance of internal systems, or outside it to get a broader view.
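The rerun-before-escalation behavior described above can be sketched as follows. This is a simplified illustration under stated assumptions, not how any particular monitoring product implements it: `run_with_retry`, `CheckResult`, and `max_reruns` are hypothetical names, and `check` stands in for whatever scripted journey you want to replay.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CheckResult:
    ok: bool          # did the scripted flow eventually succeed?
    attempts: int     # how many times the flow was executed
    escalated: bool   # True if every attempt failed


def run_with_retry(check: Callable[[], bool], max_reruns: int = 1) -> CheckResult:
    """Run a scripted check and rerun it on failure before escalating."""
    attempts = 0
    for _ in range(max_reruns + 1):
        attempts += 1
        try:
            if check():
                return CheckResult(ok=True, attempts=attempts, escalated=False)
        except Exception:
            # An exception inside the script counts as a failed attempt.
            pass
    return CheckResult(ok=False, attempts=attempts, escalated=True)


# A flaky flow: fails once, then succeeds on the rerun.
outcomes = iter([False, True])
flaky = run_with_retry(lambda: next(outcomes))
print(flaky)

# A flow that keeps failing is escalated after the rerun.
broken = run_with_retry(lambda: False)
print(broken)
```

A scheduler would call `run_with_retry` at the chosen interval for each location and browser. The rerun filters out one-off glitches so that only repeatable failures raise an alert.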

Although real user monitoring (also called real user measurement) is another popular monitoring technique, synthetic monitoring is a go-to choice because it yields data that you can use to determine alert thresholds for performance issues or failures.

Unlike synthetic monitoring, real user monitoring is a passive testing method that lets you track actual end-user experience with your website or application. It enables you to track how users travel through your application, the pages they interact with, and where they drop off.

Synthetic Monitoring – Trends & Predictions

To impress tech-savvy customers with their web applications, organizations implement complex code, intricate infrastructure, and a buffet of services. This intricacy has made synthetic testing mandatory, which is evident from the market trends. According to Reportlinker, the synthetic monitoring market was valued at $838.5 million in 2020 and is expected to grow to $1,944.7 million by 2026. The report also claimed that more than 40% of users don’t wait more than 3 seconds for a page to load, and estimated that this delay could cost an eCommerce brand about $1.6 billion in losses.

More companies are leaning towards building complex applications with the advent of DevOps and cloud-based ecosystems. Synthetic monitoring fits the bill for the business need to implement a proactive monitoring strategy to govern on-premises, cloud, and hybrid infrastructure. The IT, FinTech, and telecom industries, specifically, are likely to witness a significant increase in synthetic monitoring adoption as their dependency on API integrations is growing considerably.

Cloud-based Synthetic Monitoring

Cloud-native applications add to the complexity of application testing, even though they provide a higher level of accessibility and performance potential. These applications often comprise multiple microservices and containers distributed across various servers. Such an ecosystem features dynamic topologies, constantly changing container instances, and varying SLA requirements across the services that user requests pass through. Synthetic monitoring is an ideal testing approach here, as it implements continuous, 360-degree monitoring every few minutes in both pre-deployment and production stages. This allows you to identify issues in the initial stages and improve the end-user experience.

Observability is a key capability for DevOps and cloud-native teams to actively debug their applications. Its basis is formed by measuring patterns that are not predefined, i.e., by seeking insights from metrics, logs, and tracing. These three factors are considered the three pillars of observability, which help detect performance degradations, bugs, and outages across the distributed systems.

Reasons Why Synthetic Monitoring is an Asset

1. Detect & resolve issues early

With synthetic monitoring, you replicate an end user’s interaction with your website or application from distinct locations and devices. This allows you to detect errors and bugs before and during production, and it remains useful after release because environment-specific issues continue to crop up. Carefully monitoring your application workflows, APIs, and other components enables you to find the underlying cause of performance challenges and resolve them.

2. Improve availability

Traditional testing procedures often promise high application performance, yet even a slight change in traffic volume can lead to hang-ups and service failures. This is because most testing strategies don’t consider high-traffic scenarios. With synthetic monitoring, however, you can simulate peak traffic periods, or direct unusually high or low volumes of requests to a particular service, to check its availability. You can run these tests with a variety of test variables.

3. Monitor complex business processes

To offer a high-performance application, you must conduct end-to-end monitoring of complex transactions and business processes, such as logging in, registering, using the shopping cart, and browsing product pages. Monitoring these journeys across different geographical locations, devices, and browsers helps you optimize navigation by identifying the key areas for improvement.

4. Set realistic SLAs

Synthetic monitoring gives you a holistic perspective of your application’s performance, availability, reliability, and limitations. This data enables you to set appropriate SLAs and adhere to them without challenges or compliance issues.
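As a rough illustration of how check results can inform an SLA target, the sketch below computes measured availability from a day of pass/fail results and compares it with a candidate target. The function names and the sample numbers are assumptions made for this example only.

```python
def availability_pct(results):
    """Percentage of synthetic checks that passed (results is a list of bools)."""
    if not results:
        raise ValueError("no check results to evaluate")
    return 100.0 * sum(results) / len(results)


def meets_sla(results, target_pct):
    """True if measured availability is at or above the candidate SLA target."""
    return availability_pct(results) >= target_pct


# Assumed sample: one check every 15 minutes for a day is 96 checks, one failed.
day = [True] * 95 + [False]
print(round(availability_pct(day), 2))
print(meets_sla(day, 99.0), meets_sla(day, 98.0))
```

With these assumed numbers, a single failed check in a day already puts a 99% daily target out of reach, which is the kind of evidence that helps you choose an SLA you can actually keep.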

5. Keep third-party vendors in check

Web or mobile applications consist of multiple third-party components and capabilities, such as payment gateways, recommendation plugins, and site search features. Synthetic monitoring tests service level objectives and alerts you to any performance issues so that you can engage with your vendors with data-backed complaints.

6. End-user-centric testing

The primary reason why synthetic monitoring is gaining such prominence is that it tests your applications from an end-user point of view. Using a wide array of factors like location, user device, and internet service gives you rich and insightful data, including response time, page load, and other web vitals, to assess performance in real use-case scenarios.

The market today is full of tools and platforms, both open-source and paid, that help you build scripted scenarios and workflows. They offer either manual line-by-line scripting or script recording tools. While open-source options are free, creating scripts with them can be time-consuming, which can be a drawback for organizations looking for a faster development process.

7. Benchmark performance

The data produced by multiple, repeated tests gives you enough understanding of your application to baseline performance thresholds. This data not only highlights the problematic components in your system or code but also helps you define performance enhancement strategies. You can also use the data to benchmark your application’s performance and reliability against your competitors.

For instance, let’s say the load time of your mobile banking application is 4 seconds, and it is ranked at a certain level among similar banking applications on Google Play Store or App Store. Now consider a competitor’s banking app that appears in the top position of the search results and has a higher ranking.

A feature-rich synthetic monitoring tool, such as QualityKiosk’s proprietary CX monitoring platform QTRAC, not only helps you resolve the application issues discussed above but also explains how your application can rank better on the mobile app stores through competitive benchmarking. In this case, QTRAC gives you actionable insights on how you can reduce your application’s load time to 3 seconds and provide a fast, frictionless customer experience.
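Independent of any specific tool, the baselining step described in this section can be sketched in a few lines. The example below is a simplified illustration: it derives an alert threshold from repeated load-time measurements using a nearest-rank percentile plus some headroom. The function names, the 95th percentile, the 20% headroom, and the sample load times are all assumptions, not values from QTRAC or any other product.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of measurements."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def alert_threshold(samples, pct=95, headroom=1.2):
    """Baseline percentile multiplied by a headroom factor (both assumed defaults)."""
    return percentile(samples, pct) * headroom


# Assumed load times in seconds from ten repeated synthetic runs.
load_times = [2.1, 2.3, 2.2, 2.4, 2.0, 2.5, 2.2, 2.3, 2.1, 4.0]
print("median:", percentile(load_times, 50))
print("p95:", percentile(load_times, 95))
print("alert above:", alert_threshold(load_times))
```

Comparing the median with a high percentile shows whether slow runs are typical or outliers, and the same calculation applied to a competitor’s measured load times gives you a like-for-like benchmark.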

QTRAC

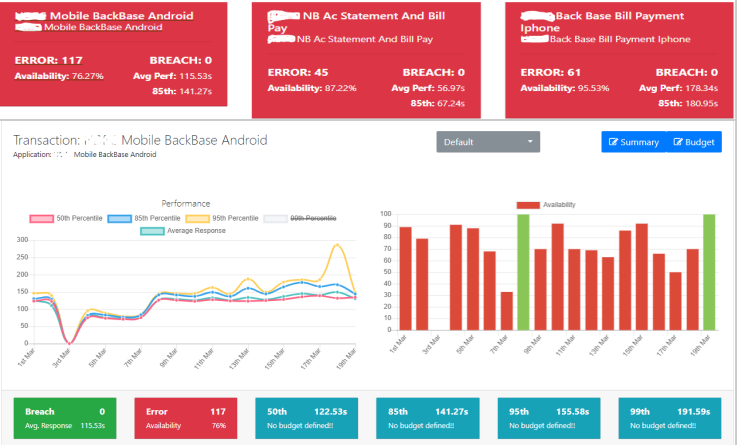

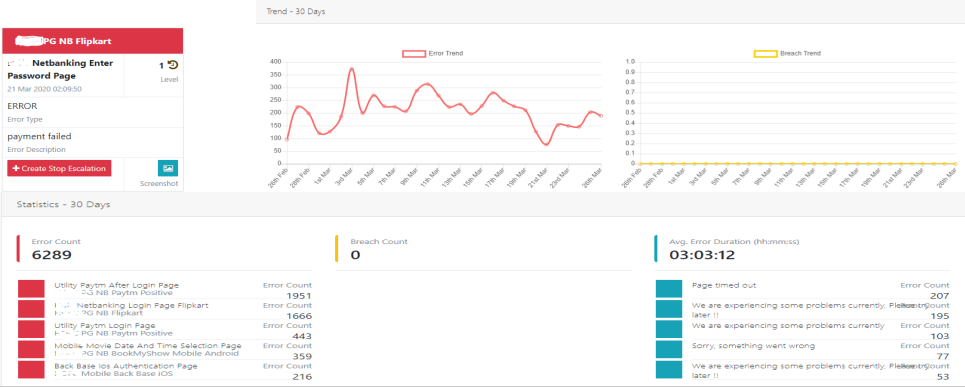

QTRAC is a cloud-based synthetic/customer experience (CX) monitoring solution that enables you to monitor and manage the performance of your on-premises and cloud applications. It supports application development throughout the SDLC (software development life cycle) to detect and troubleshoot issues and vulnerabilities as early as possible. Its data processing and analytics capabilities and user-friendly dashboards let you visualize performance issues and take immediate action.

The platform also displays errors such as the overall error count, response time breach, average error duration, and error count for a specified duration.

What Makes QTRAC Different?

QTRAC can navigate complex customer journeys including authorizations such as CAPTCHA, Mobile OTP (One Time Password), and 2-Factor authentication. The Continuous Robotic Monitoring platform gives business users real-time visibility into Server, API, switches (Banking), mobile and web application availability, average response time for a specified range, and a drill-down of performance issues to transactions and pages. Apart from the dashboard, clients get notifications on the QTRAC app, via email, SMS, and phone call as well.

In short, synthetic monitoring gives you the same perspective as your end users so you can work towards improving their digital experience. This is true not just for mobile banking apps but for any business application on its digital transformation journey across verticals: over-the-top (OTT) media services, eCommerce, FinTech, food delivery, and super apps.

If you are facing any challenges with your application’s performance or need clear visibility into how your digital product stacks up against others in the marketplace, contact us to get a complimentary competitive and industry benchmarking report.

About the Author

Sanjeev Chugh, BU Head – Cloud & Service Delivery Excellence at QualityKiosk Technologies

Sanjeev is a seasoned IT professional with more than 24 years of experience in developing, executing, and enabling operational and quality excellence. At QualityKiosk, he has implemented several strategic, continuous innovation and improvement initiatives, and is responsible for seamless and consistent delivery of cloud infrastructure and digital transformation engagements to clients globally. Prior to joining QualityKiosk, Sanjeev held leadership roles with BNP Paribas India, Datamatics Global Services, Mastek, and Deloitte. Sanjeev earned his bachelor’s degree in Computer Engineering from the University of Mumbai.

To connect with Sanjeev Chugh, email letsconnect@qualitykiosk.com